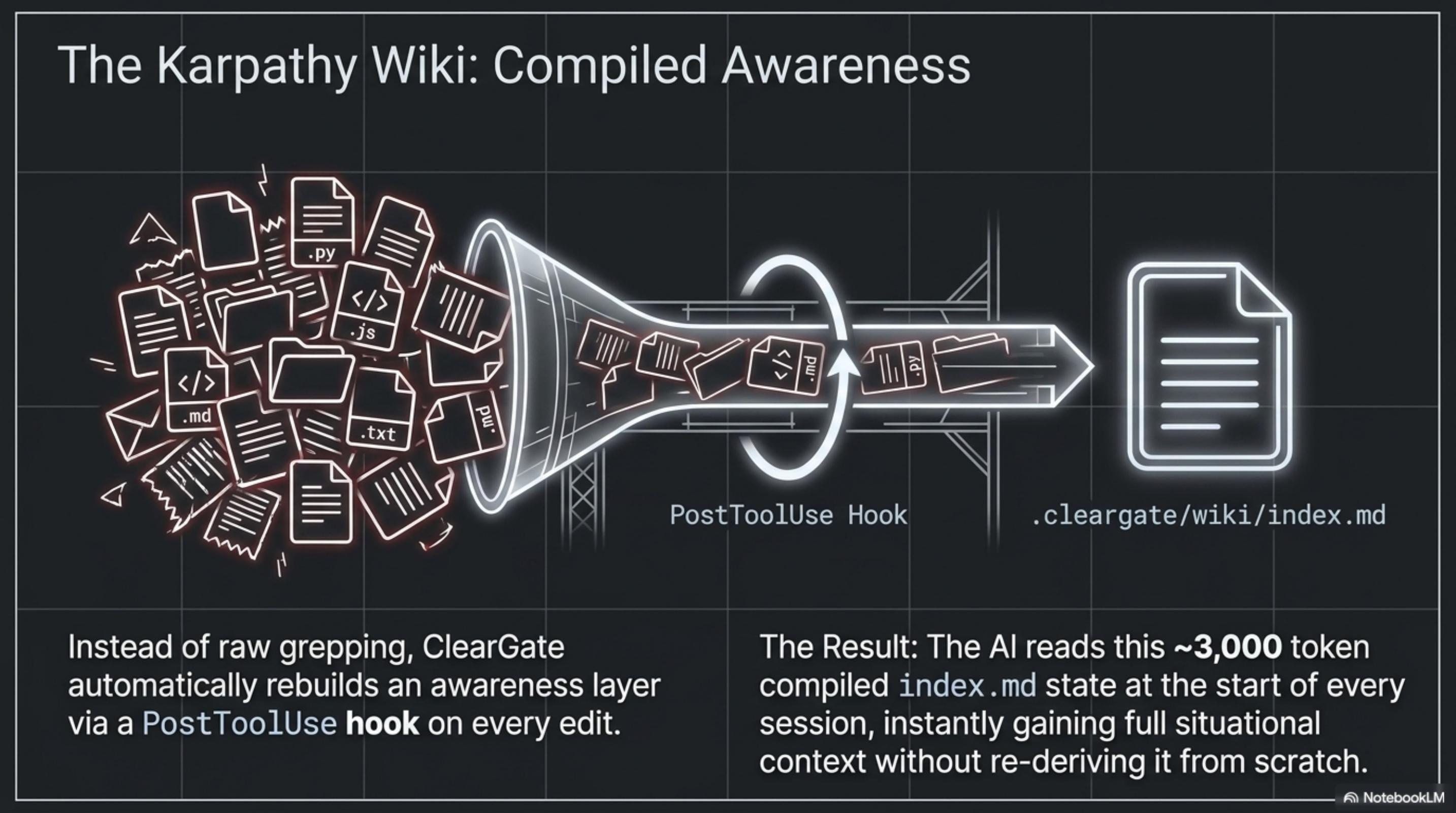

Compiled Awareness (Karpathy Wiki)

A PostToolUse hook rebuilds a ~3k-token index.md on every edit, so every session starts with full situational context instead of blind grepping.
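As a rough illustration of the index rebuild, the sketch below walks a directory of Markdown files and writes a compact `index.md`. The file layout, the `rebuild` entry point, and the four-characters-per-token estimate are all assumptions made for this example, not details taken from ClearGate; wiring the function into an actual PostToolUse hook is left out.

```python
from pathlib import Path

TOKEN_BUDGET = 3000  # mirrors the "~3k-token" figure; the exact limit is an assumption


def estimate_tokens(text: str) -> int:
    """Very rough heuristic: about four characters per token (an assumption)."""
    return len(text) // 4


def first_heading(path: Path) -> str:
    """Return the first Markdown heading in a file, or the file name if none."""
    for line in path.read_text(encoding="utf-8").splitlines():
        if line.startswith("#"):
            return line.lstrip("#").strip()
    return path.name


def build_index(root: Path, budget: int = TOKEN_BUDGET) -> str:
    """Build a one-line-per-file index, stopping before the budget is exceeded."""
    lines = ["# Index", ""]
    for path in sorted(root.rglob("*.md")):
        if path.name == "index.md":
            continue  # never index the index itself
        entry = f"- [{first_heading(path)}]({path.relative_to(root).as_posix()})"
        if estimate_tokens("\n".join(lines + [entry])) > budget:
            break
        lines.append(entry)
    return "\n".join(lines) + "\n"


def rebuild(root: Path) -> Path:
    """Hypothetical entry point that a hook command could call after each edit."""
    out = root / "index.md"
    out.write_text(build_index(root), encoding="utf-8")
    return out
```

Rebuilding from scratch on every edit keeps the logic simple and avoids incremental-update bugs, at the cost of re-reading each file.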

Exadel Code Fest 2026

Three solutions. One thesis: AI is only as good as the structure you give it.

We worked as a single team across all three solutions, with no per-product owners. We split daily into pair-and-rotate sessions, met morning and evening to compare notes, and kept that shared thesis pinned throughout. Each solution approaches it from a different altitude.

Anyone could (and did) commit to any of the three.

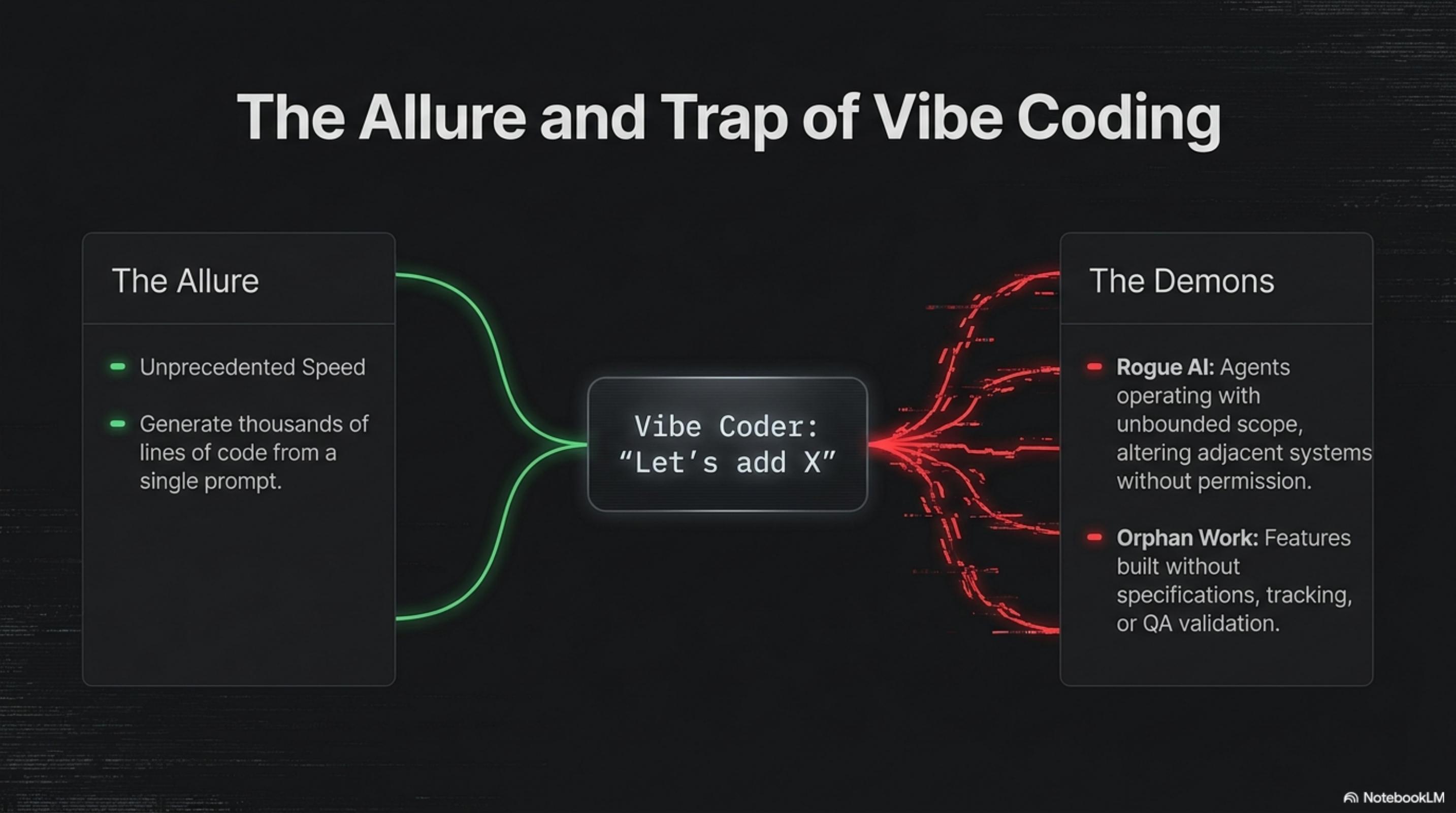

Solution 1 of 3: ClearGate

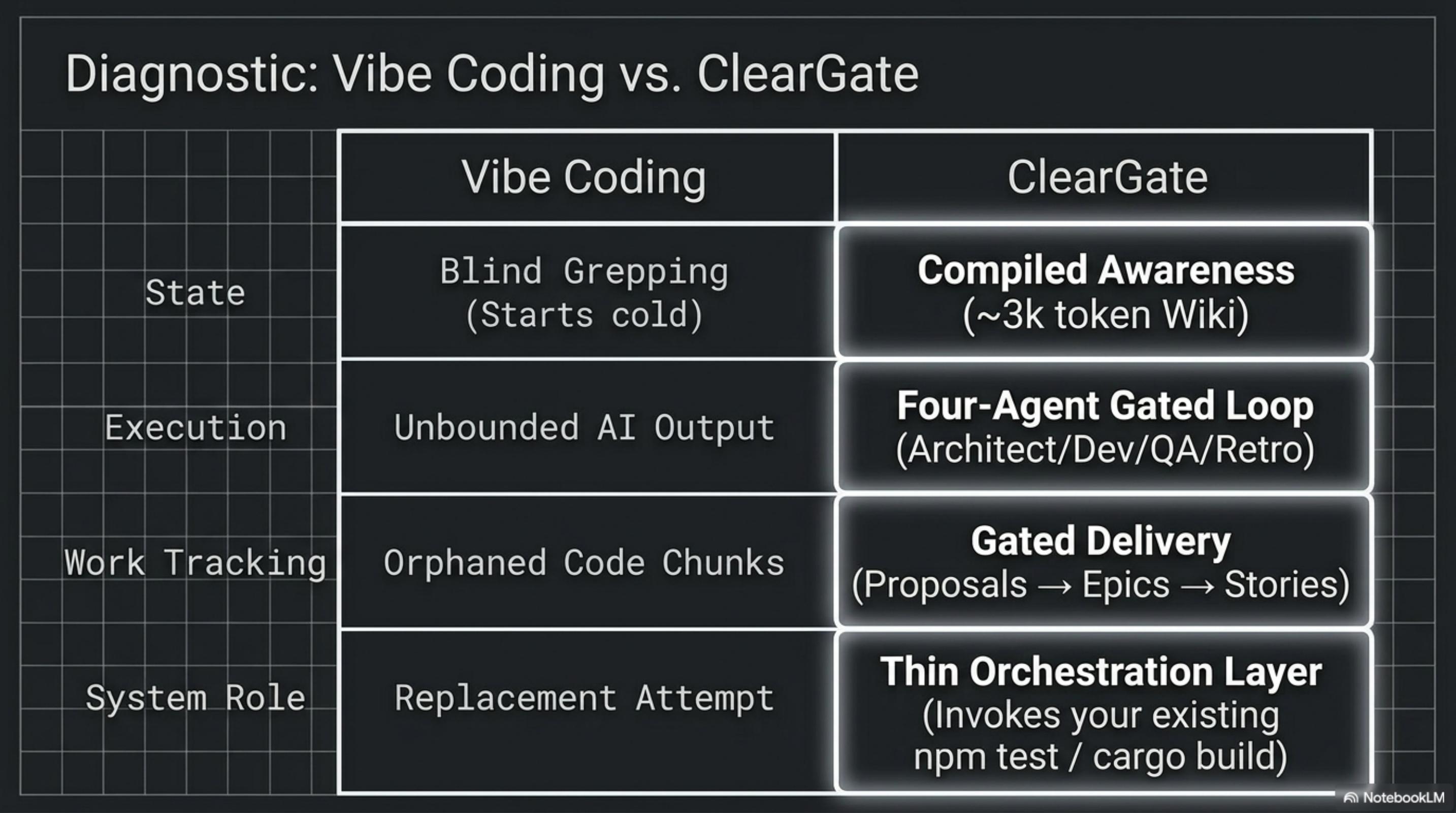

Bridging vibe coding and enterprise delivery.


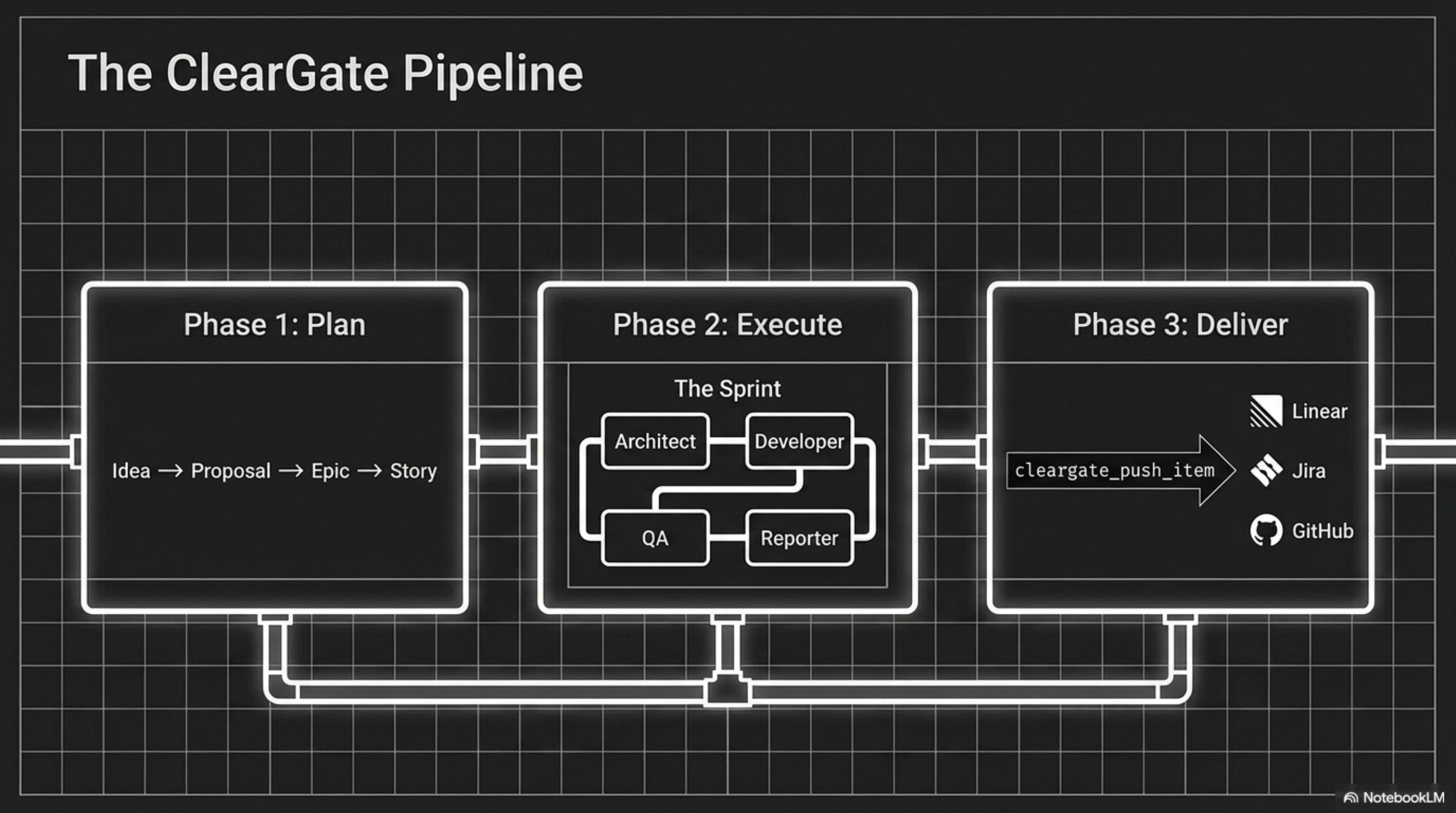

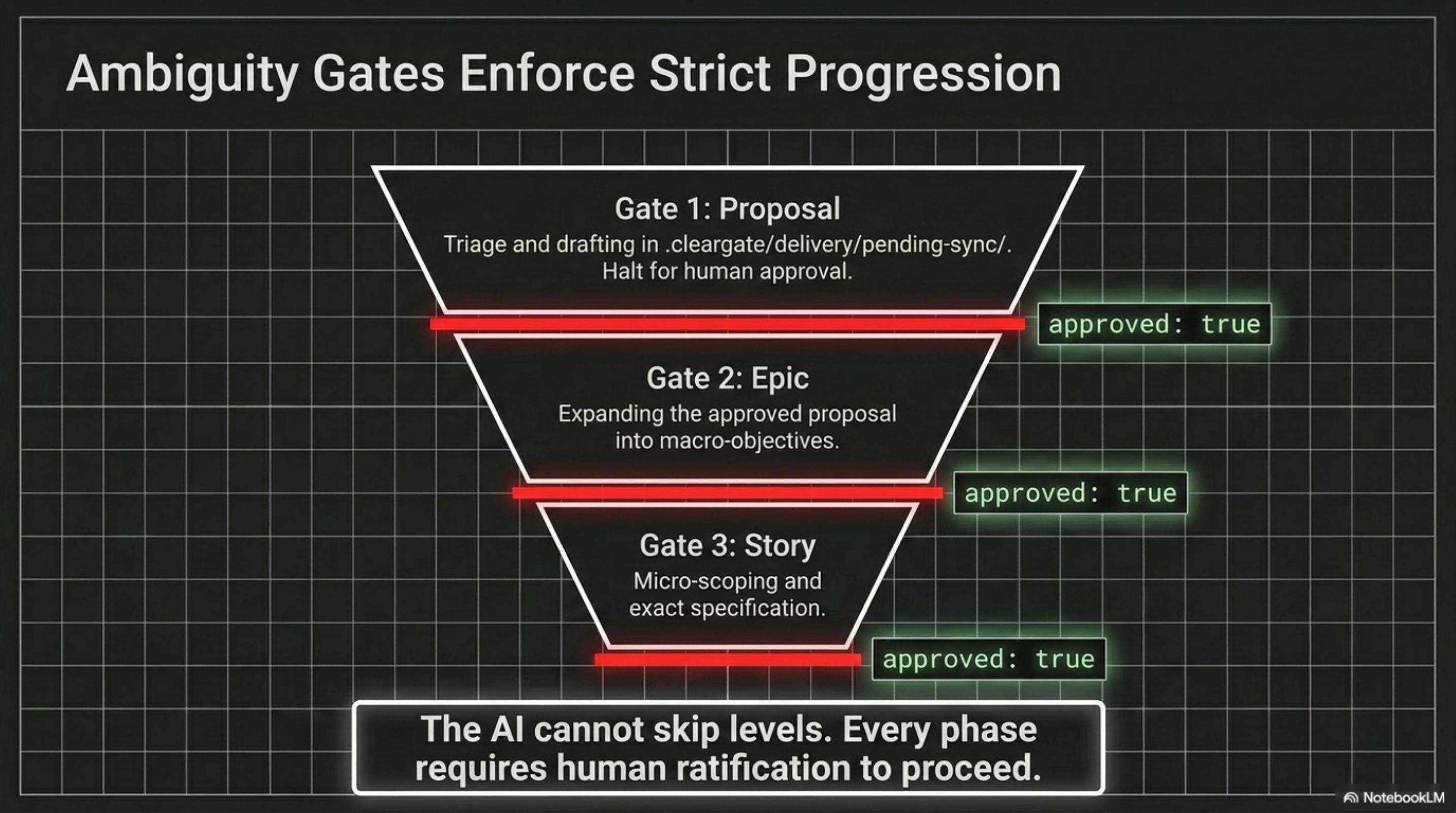

Proposal → Epic → Story. Every phase requires human ratification (approved: true) before the AI can proceed.
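The ratification gate can be sketched as a check on each artifact's front matter. The `approved: true` flag comes from the text above; the `---`-delimited front-matter layout, the phase keys, and the function names are assumptions made for illustration.

```python
from typing import Dict, Optional

PHASES = ["proposal", "epic", "story"]  # order taken from the flow above


def read_front_matter(text: str) -> Dict[str, str]:
    """Parse a minimal `key: value` block delimited by '---' lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: Dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def is_approved(text: str) -> bool:
    """True only when a human has set `approved: true` in the front matter."""
    return read_front_matter(text).get("approved", "").lower() == "true"


def blocking_phase(artifacts: Dict[str, str]) -> Optional[str]:
    """Return the first phase that is missing or unratified, or None if all passed.

    The AI may not work past the returned phase until a human approves it.
    """
    for phase in PHASES:
        if not is_approved(artifacts.get(phase, "")):
            return phase
    return None
```

For example, with an approved Proposal and a draft Epic, `blocking_phase` returns `"epic"`, so no Story work may start yet.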

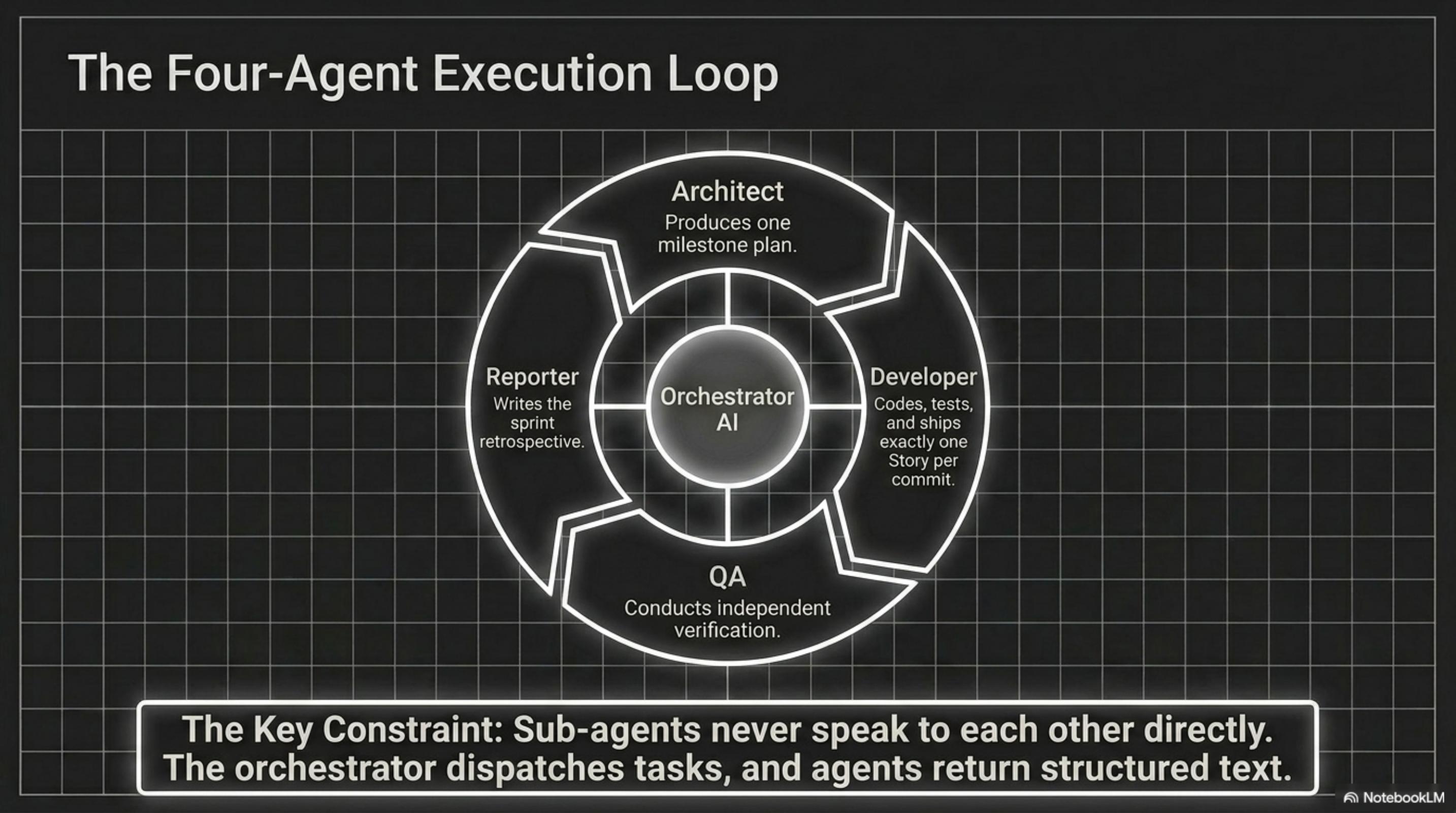

Architect (one milestone plan) → Developer (one story per commit) → QA (independent verification) → Reporter (sprint retrospective). Sub-agents never talk to one another directly.
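One way to read this pipeline is that an orchestrator owns every handoff, so each role only ever sees the record it is given. The sketch below assumes that reading; the role functions, the `Handoff` dictionary, and its keys are hypothetical stand-ins for real agent calls.

```python
from typing import Callable, Dict, List, Tuple

Handoff = Dict[str, str]
Role = Callable[[Handoff], Handoff]


def architect(h: Handoff) -> Handoff:
    return {**h, "plan": f"milestone plan for {h['goal']}"}


def developer(h: Handoff) -> Handoff:
    return {**h, "commit": f"one commit implementing {h['story']}"}


def qa(h: Handoff) -> Handoff:
    # Verification looks only at the handoff record, not at the developer itself.
    return {**h, "verdict": "pass" if "commit" in h else "fail"}


def reporter(h: Handoff) -> Handoff:
    return {**h, "report": f"retrospective: QA verdict was {h['verdict']}"}


PIPELINE: List[Tuple[str, Role]] = [
    ("architect", architect),
    ("developer", developer),
    ("qa", qa),
    ("reporter", reporter),
]


def run_pipeline(initial: Handoff) -> Tuple[Handoff, List[str]]:
    """Run each role in order; the orchestrator is the only channel between them."""
    handoff = dict(initial)
    log: List[str] = []
    for name, role in PIPELINE:
        handoff = role(handoff)
        log.append(name)
    return handoff, log
```

Because roles never hold references to each other, swapping one out or replaying a stage from a saved handoff requires no change to the others.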

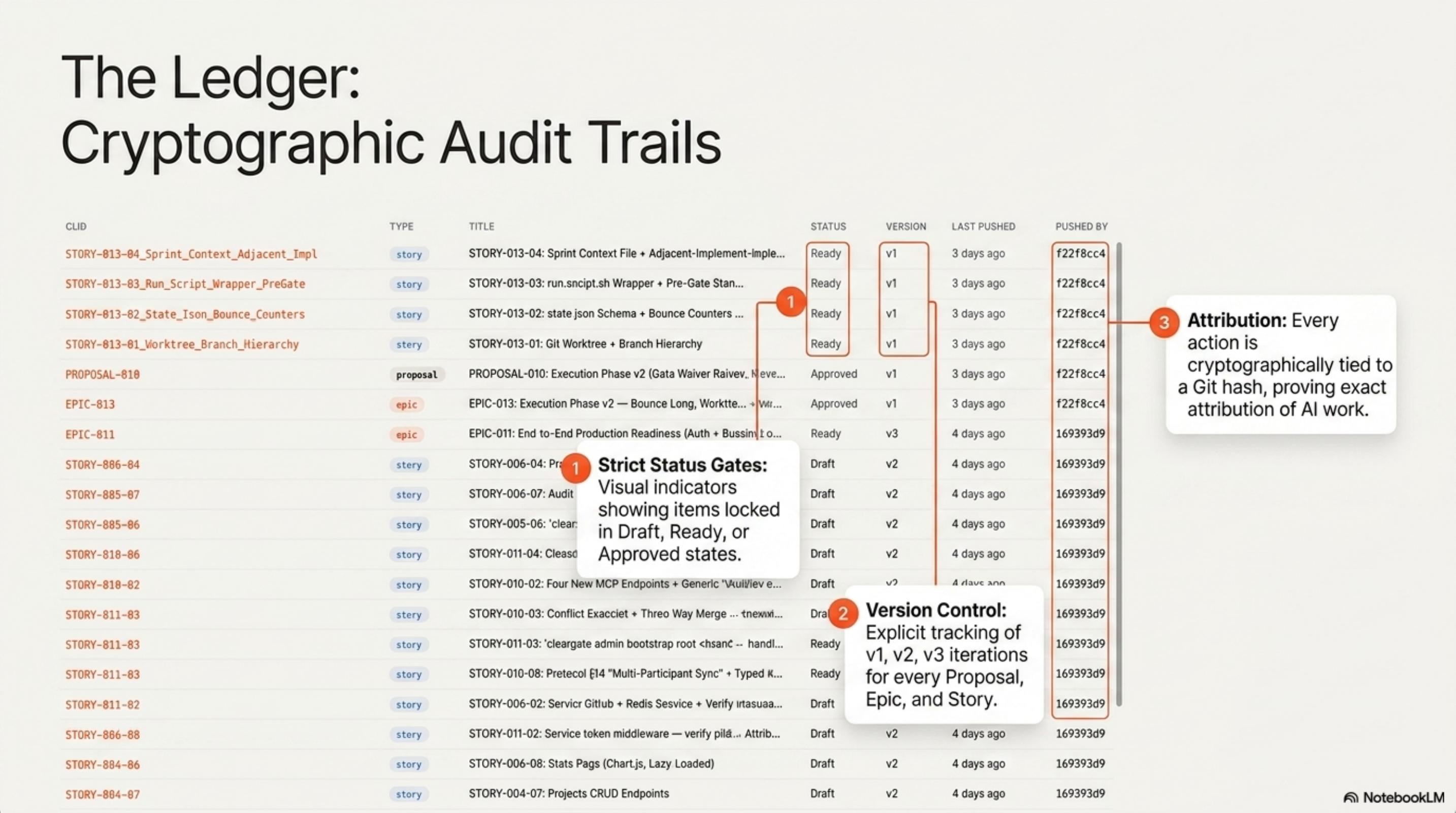

Every Proposal, Epic, and Story is tied to a Git SHA — exact attribution of AI work, with explicit version control on every artifact.
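A minimal sketch of SHA attribution follows, assuming the SHA is recorded as a front-matter field. The `git_sha` field name and both helper functions are hypothetical; `git rev-parse HEAD` is the standard Git command for reading the current commit.

```python
import subprocess


def current_sha(repo_dir: str = ".") -> str:
    """Return the commit SHA that HEAD points to in the given repository."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"],
        cwd=repo_dir,
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def stamp(artifact_text: str, sha: str) -> str:
    """Record the SHA in the artifact's front matter (hypothetical `git_sha` field).

    The SHA is the commit the work was based on; a file cannot contain the
    hash of the commit that introduces it.
    """
    field = f"git_sha: {sha}"
    if artifact_text.startswith("---\n"):
        # Existing front matter: insert the field right after the opening fence.
        return "---\n" + field + "\n" + artifact_text[4:]
    return f"---\n{field}\n---\n{artifact_text}"
```

Typical use would be `stamp(text, current_sha())` at the moment an artifact is generated.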

Local .cleargate/delivery/pending-sync/ is the source of truth; once approved, the MCP adapter pushes ratified Epics and Stories into your existing PM stack.

Solution 2 of 3: Tee-Mo

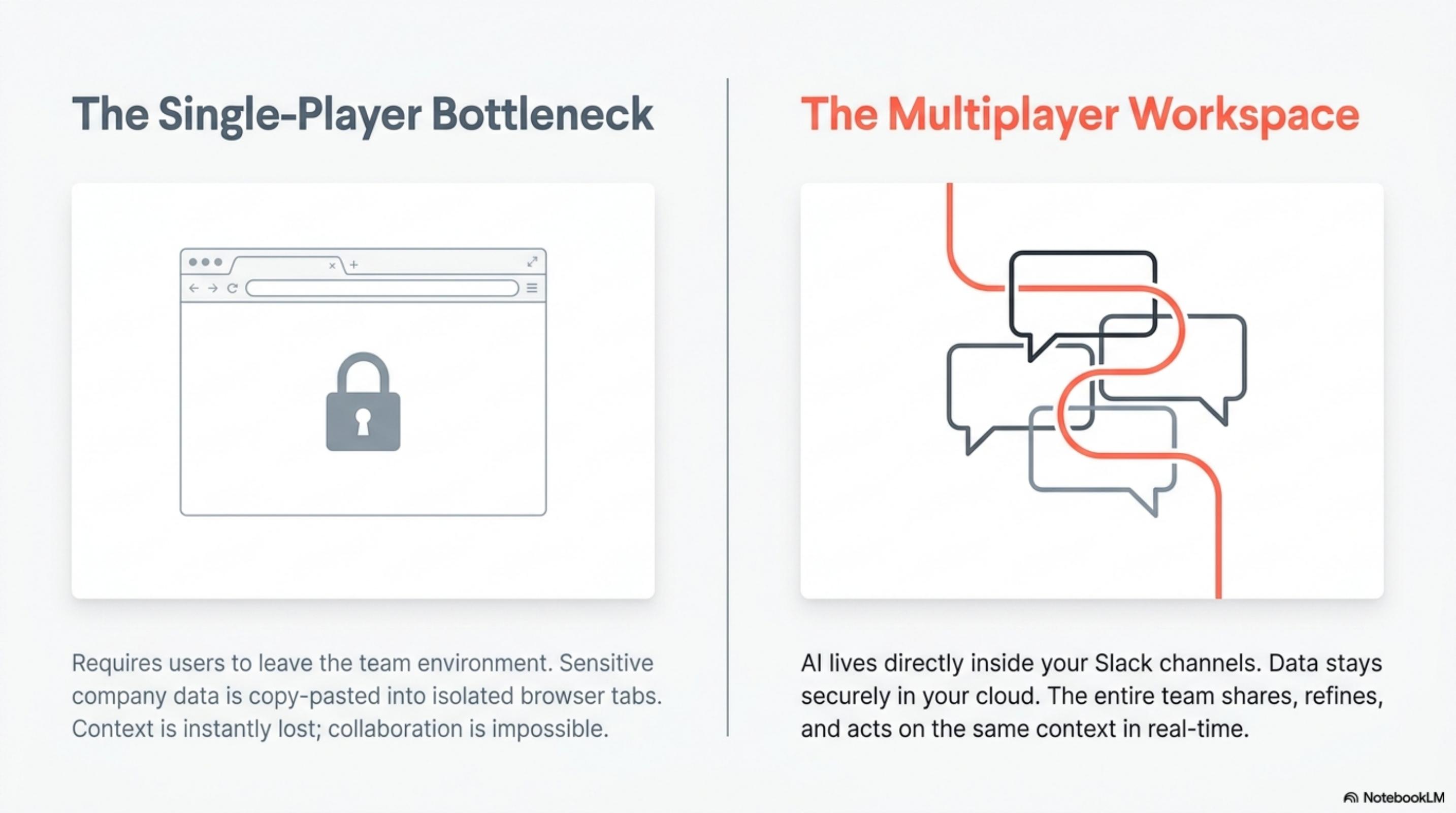

Secure, Slack-native collaborative AI for the modern workspace.

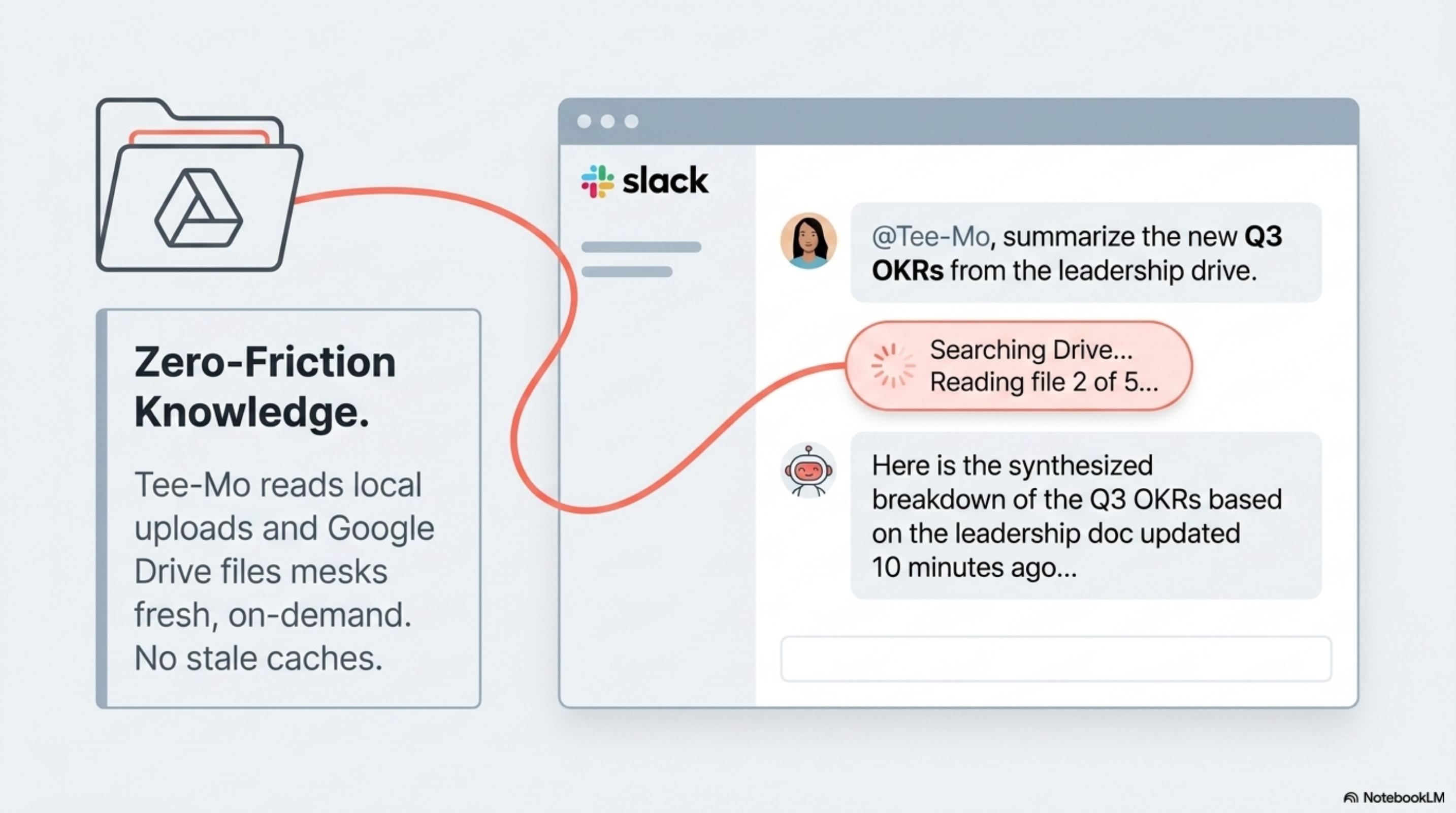

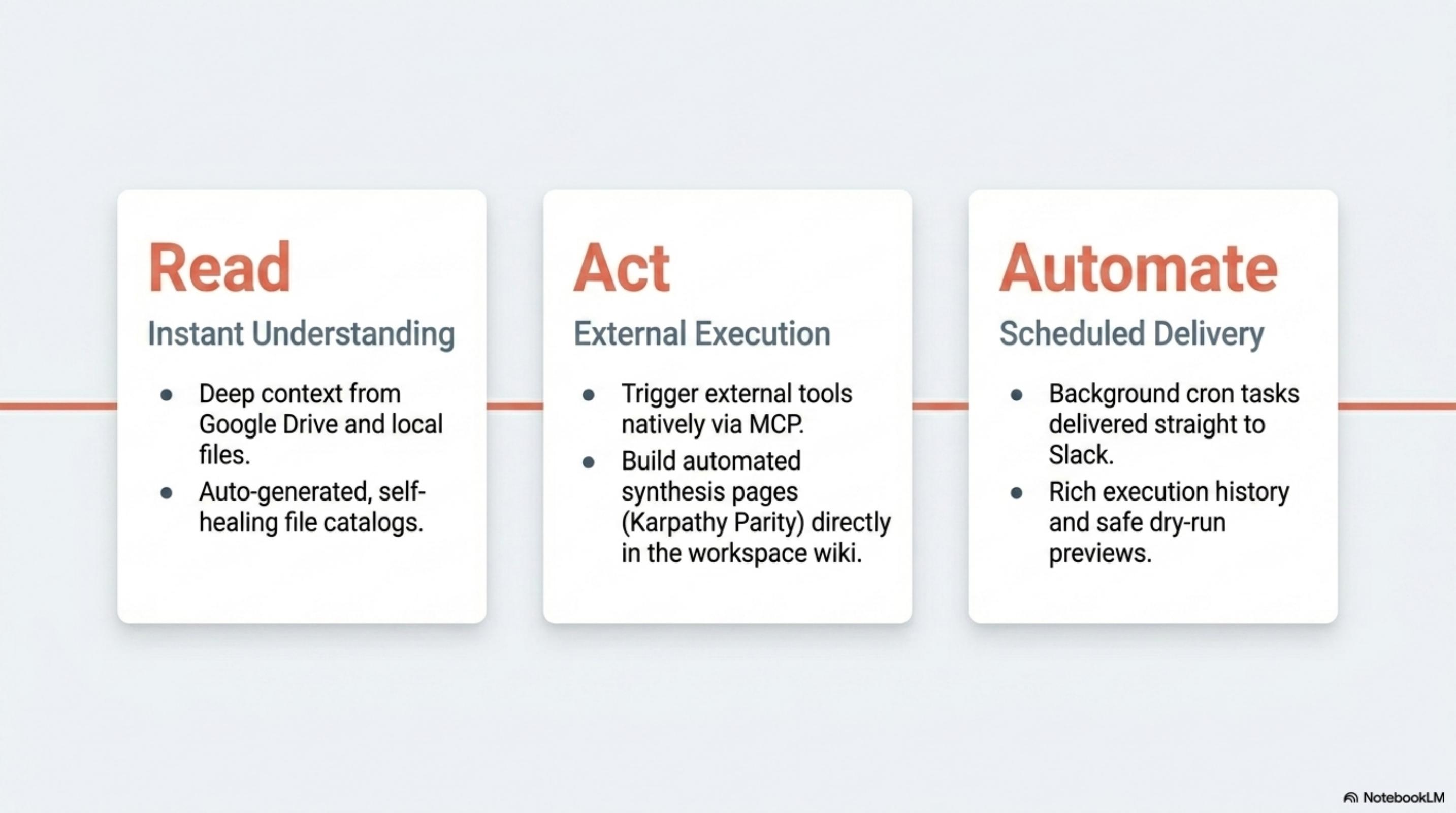

Deep context from Google Drive and local files via auto-generated, self-healing file catalogs — no stale caches, no shredded RAG chunks.

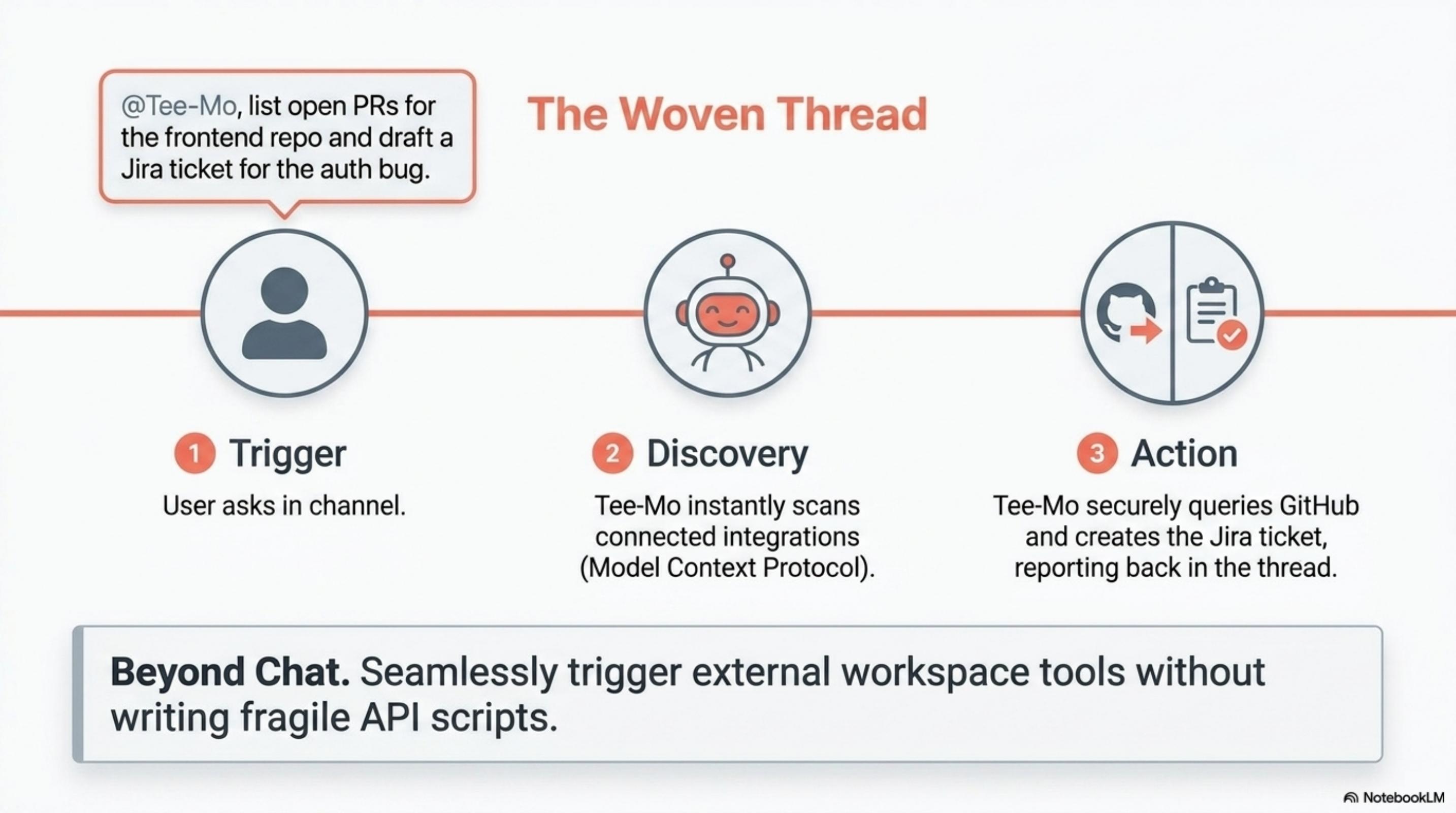

External execution via MCP — query GitHub, draft Jira tickets, build synthesis pages directly in the workspace wiki (Karpathy Parity).

Scheduled cron tasks deliver background intelligence straight to Slack, with rich execution history and safe dry-run previews.
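The dry-run idea can be sketched as a task object that records every run but only executes its action when the run is not a preview. The class names, fields, and preview message are assumptions for this example, and the cron expression is stored without being evaluated.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class Run:
    task: str
    started_at: datetime
    dry_run: bool
    output: str


@dataclass
class ScheduledTask:
    name: str
    schedule: str  # cron expression; kept as metadata only in this sketch
    action: Callable[[], str]
    history: List[Run] = field(default_factory=list)

    def run(self, dry_run: bool = False) -> Run:
        """Execute the task, or only describe it when dry_run is set."""
        if dry_run:
            output = f"[dry run] would execute '{self.name}' and post the result"
        else:
            output = self.action()
        record = Run(self.name, datetime.now(timezone.utc), dry_run, output)
        self.history.append(record)  # previews are kept in history too
        return record
```

Keeping previews in the same history as real runs makes it easy to see what was tested before a task went live.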

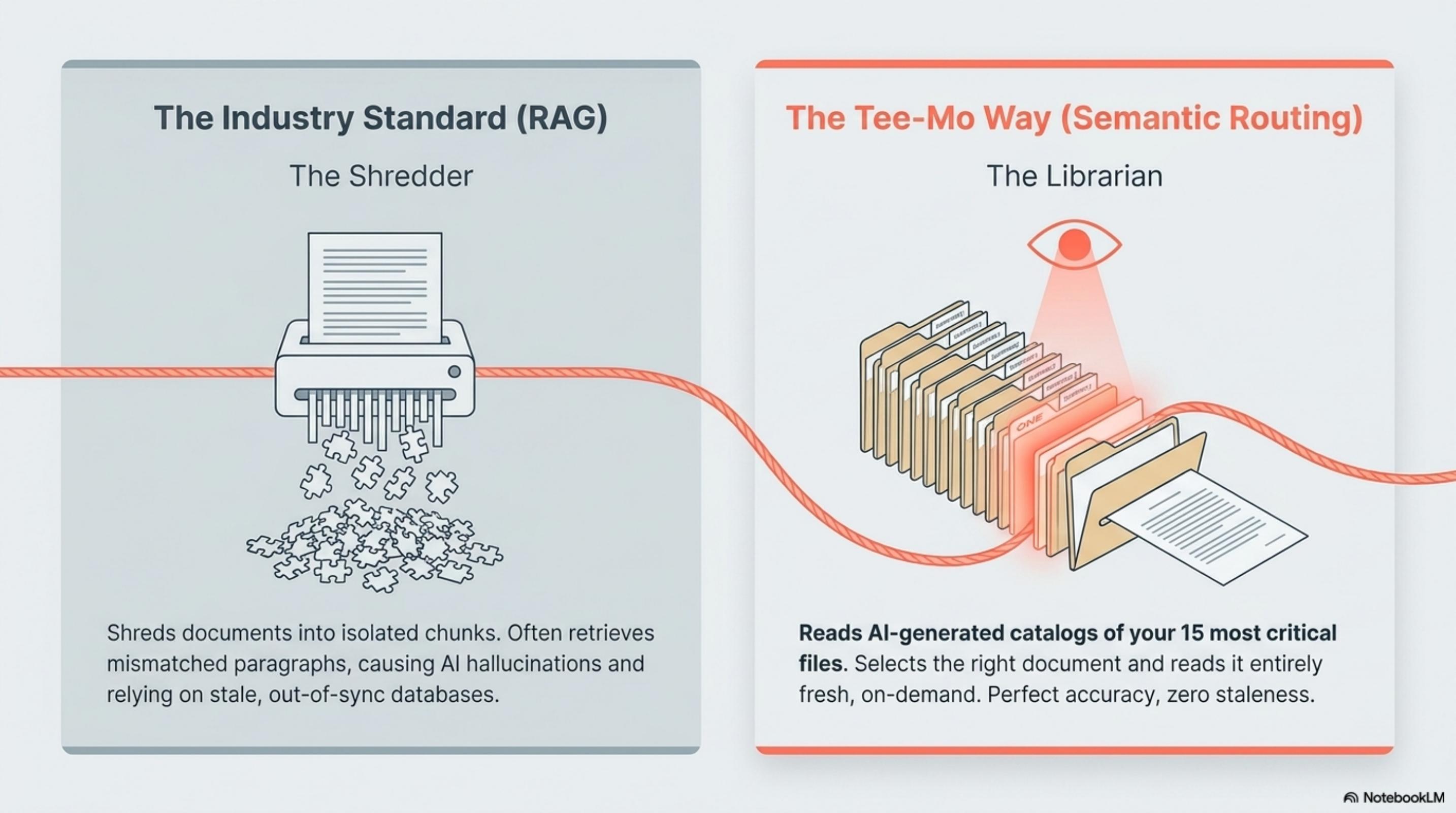

The "Librarian" model: the agent reads AI-generated catalogs of your 15 most critical files, selects the right document, and reads it in full at query time, so answers come from the current file rather than a stale copy or a partial chunk.
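The select-then-read flow might look like the sketch below. The catalog structure and function names are hypothetical, and a simple word-overlap score stands in for the model's choice of document; the key point is that the file is read from disk only at question time.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import List

MAX_CATALOG_SIZE = 15  # the "15 most critical files" from the text


@dataclass
class CatalogEntry:
    path: Path
    summary: str  # AI-generated description of the file


def select_document(question: str, catalog: List[CatalogEntry]) -> CatalogEntry:
    """Pick the entry whose summary shares the most words with the question.

    Word overlap is a stand-in here; in practice a model would choose.
    """
    if not catalog:
        raise ValueError("catalog is empty")
    if len(catalog) > MAX_CATALOG_SIZE:
        raise ValueError("catalog exceeds the supported size")
    words = set(question.lower().split())
    return max(catalog, key=lambda e: len(words & set(e.summary.lower().split())))


def load_context(question: str, catalog: List[CatalogEntry]) -> str:
    """Read the selected file in full, fresh from disk, with no cached copy."""
    entry = select_document(question, catalog)
    return entry.path.read_text(encoding="utf-8")
```

Only the short summaries are held ahead of time, so an edit to a document is visible on the very next question.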

You hold the API keys; Tee-Mo is purely the engine. Every credential is encrypted at rest with AES-256-GCM. Zero in-process state.

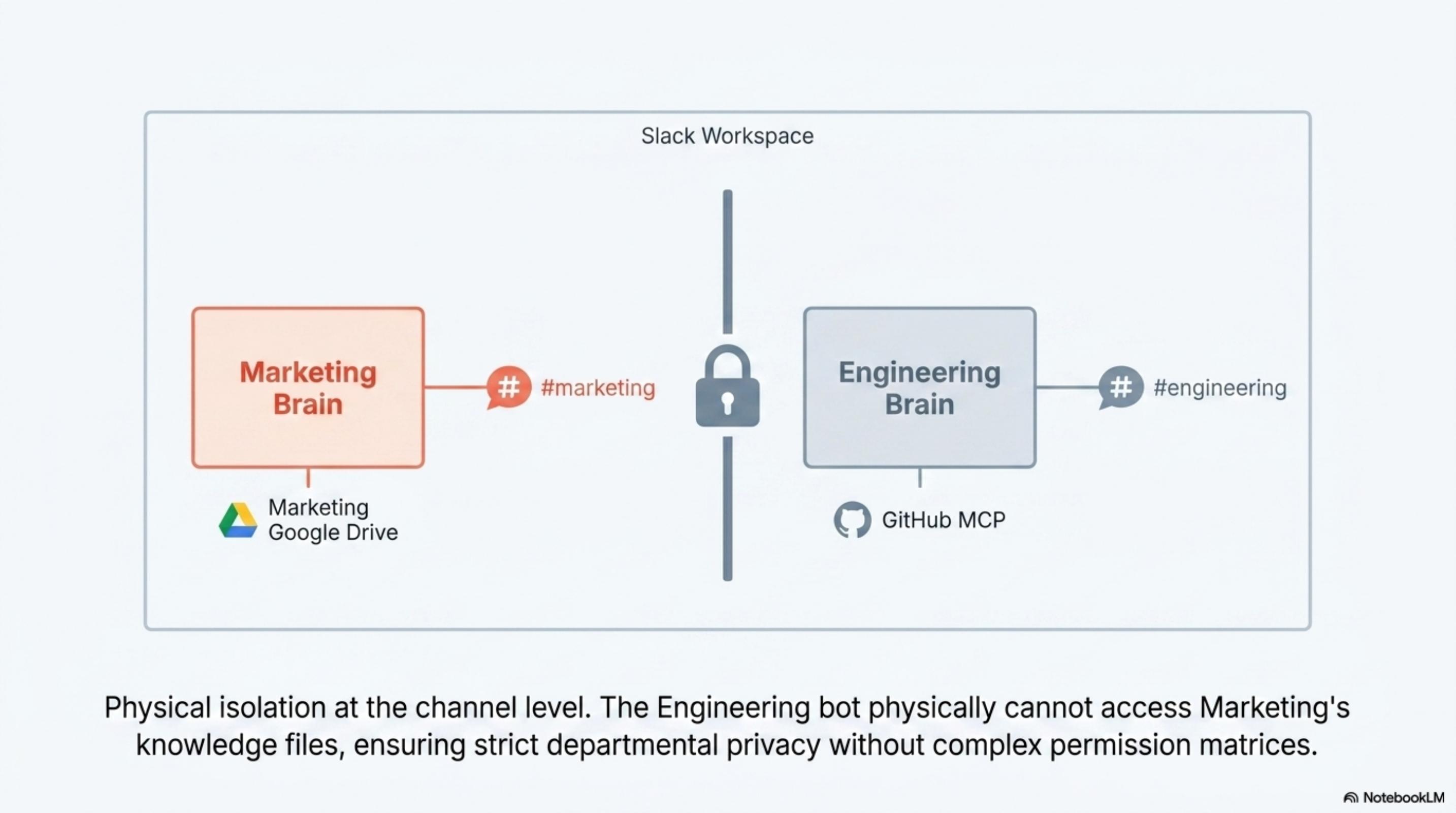

Each channel gets its own dedicated brain wired to its own documents and tools. Engineering's bot physically cannot reach Marketing's files.
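Channel isolation can be illustrated with a registry in which each channel's brain holds only its own documents, so there is nothing to reach across. The `Workspace` and `ChannelBrain` classes and the channel IDs are hypothetical names for this sketch.

```python
from typing import Dict


class ChannelBrain:
    """Holds only the documents bound to one channel."""

    def __init__(self, channel_id: str, documents: Dict[str, str]) -> None:
        self.channel_id = channel_id
        self._documents = dict(documents)  # private copy, not shared

    def read(self, doc_id: str) -> str:
        if doc_id not in self._documents:
            raise PermissionError(
                f"channel {self.channel_id} has no access to '{doc_id}'"
            )
        return self._documents[doc_id]


class Workspace:
    """Maps each channel to its own brain; brains never reference each other."""

    def __init__(self) -> None:
        self._brains: Dict[str, ChannelBrain] = {}

    def bind(self, channel_id: str, documents: Dict[str, str]) -> None:
        self._brains[channel_id] = ChannelBrain(channel_id, documents)

    def brain_for(self, channel_id: str) -> ChannelBrain:
        return self._brains[channel_id]
```

A request from the engineering channel for a marketing document fails with `PermissionError` because that document was never loaded into its brain.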

Solution 3 of 3: Exa

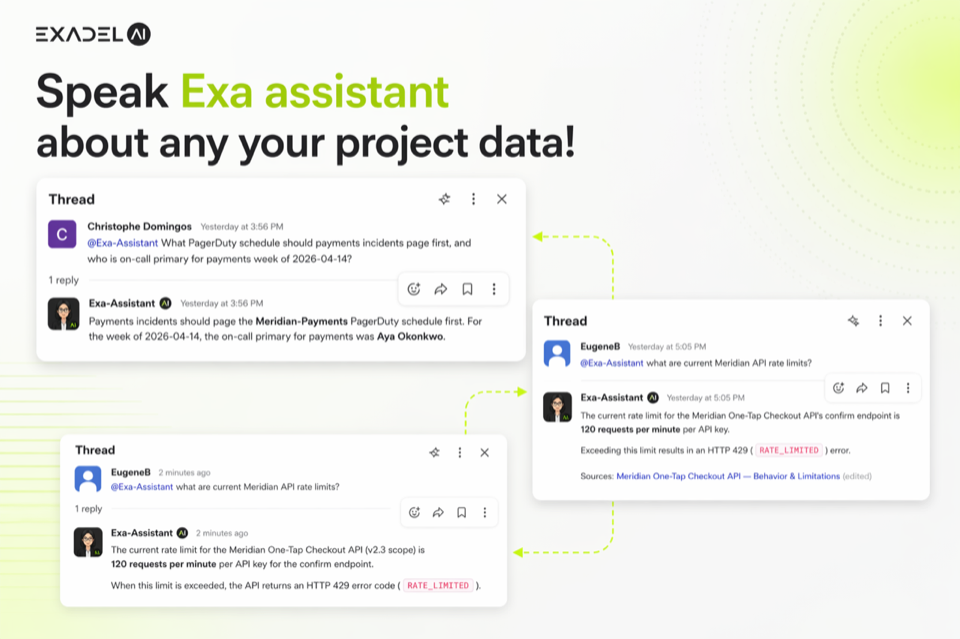

An AI-native workspace companion that lives inside Slack.

Drop documents into Google Drive — Exa parses, indexes, and syncs PDFs, Word docs, spreadsheets, and presentations. No engineering required.

Every project gets its own secure data boundary. Team A cannot see Team B's docs.

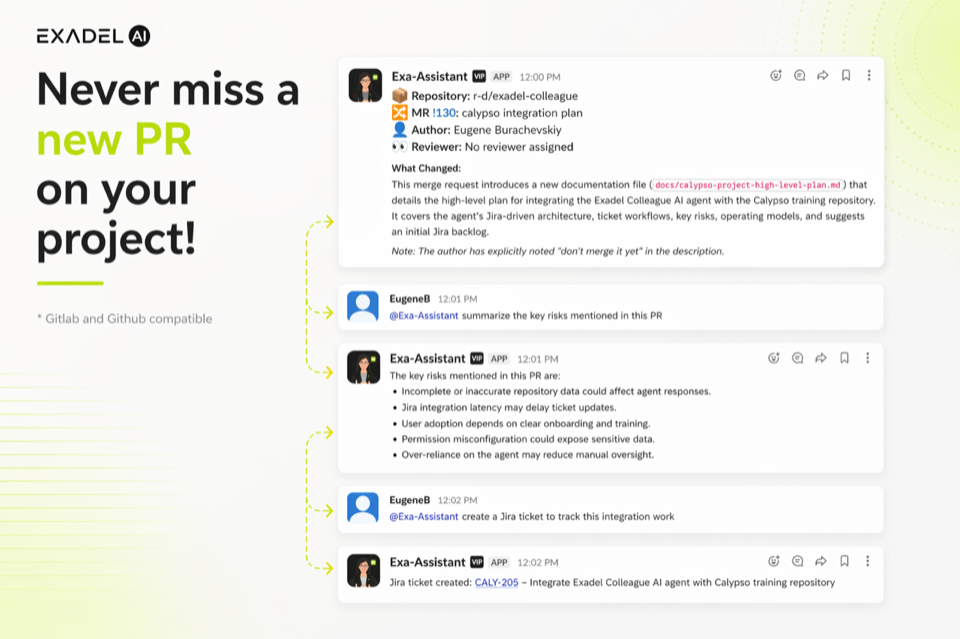

Real-time Slack alerts for PR reviews, approvals, and merge conflicts. Smart batching of CI/CD failures cuts noise without burying real breakage.
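One plausible form of smart batching is to group failures from the same pipeline that land within a short window, then send one alert per group. The five-minute window, the `Failure` record, and the alert wording are assumptions for this sketch, not Exa's actual rules.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Failure:
    pipeline: str
    timestamp: float  # seconds since some reference point
    message: str


def batch_failures(
    failures: List[Failure], window_seconds: float = 300.0
) -> List[List[Failure]]:
    """Group failures per pipeline that fall within a window of the batch's first one."""
    batches: List[List[Failure]] = []
    open_batches: Dict[str, List[Failure]] = {}
    for failure in sorted(failures, key=lambda f: f.timestamp):
        current = open_batches.get(failure.pipeline)
        if current and failure.timestamp - current[0].timestamp <= window_seconds:
            current.append(failure)
        else:
            current = [failure]  # a new window starts, so a new alert goes out
            open_batches[failure.pipeline] = current
            batches.append(current)
    return batches


def format_alert(batch: List[Failure]) -> str:
    """Render one message per batch instead of one per failure."""
    first = batch[0]
    if len(batch) == 1:
        return f"{first.pipeline} failed: {first.message}"
    return f"{first.pipeline} failed {len(batch)} times; first error: {first.message}"
```

Batching per pipeline means a flaky build cannot hide a separate deploy failure, and a failure after the window closes still produces a fresh alert.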

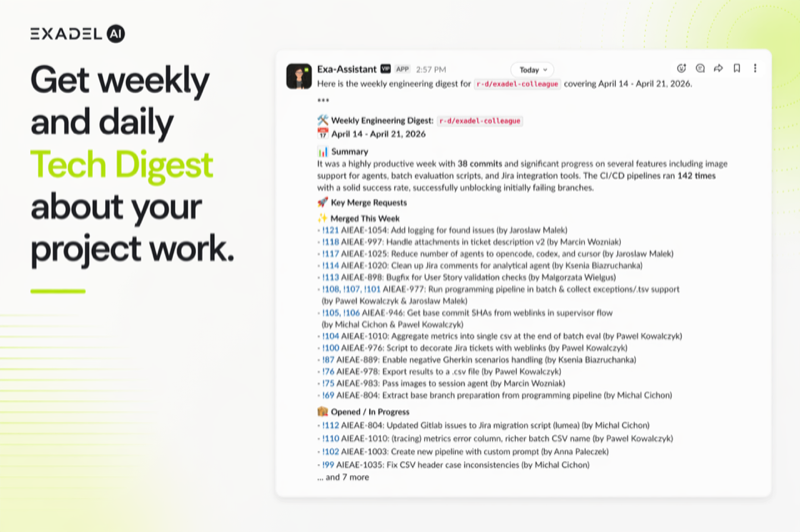

Morning summaries of merged PRs, open reviews, pipeline status, and document updates. Leadership gets clean velocity reports without status-meeting overhead.

Documents aren't a messy pile of text — they're parsed into summaries, concepts, entities, and cross-references, structured for LLM-native retrieval.
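The parsed shape described above could be modeled roughly as follows. The four fields come from the text; the class name and the rule that two documents are cross-referenced when they share an entity are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ParsedDocument:
    doc_id: str
    summary: str
    concepts: Set[str]
    entities: Set[str]
    cross_references: Set[str] = field(default_factory=set)


def link_documents(docs: List[ParsedDocument]) -> None:
    """Cross-reference every pair of documents that mention a common entity."""
    for a in docs:
        for b in docs:
            if a.doc_id != b.doc_id and a.entities & b.entities:
                a.cross_references.add(b.doc_id)
```

With links stored on each document, retrieval can follow references from one relevant document to its neighbors instead of searching raw text again.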

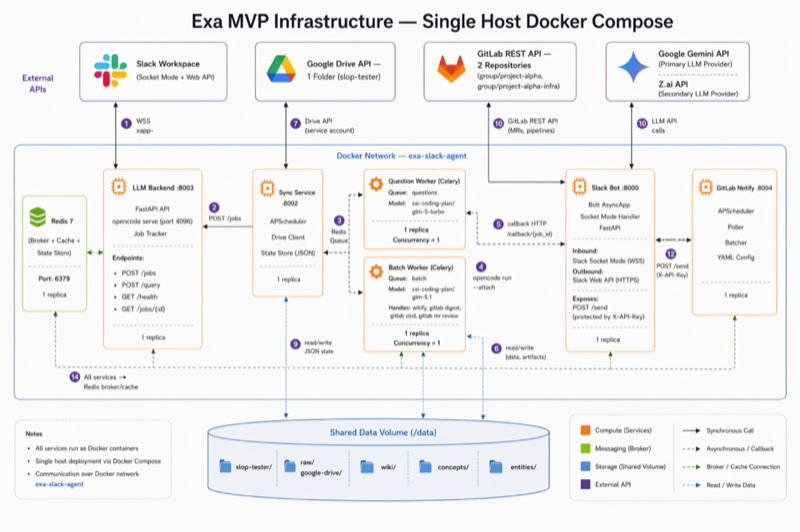

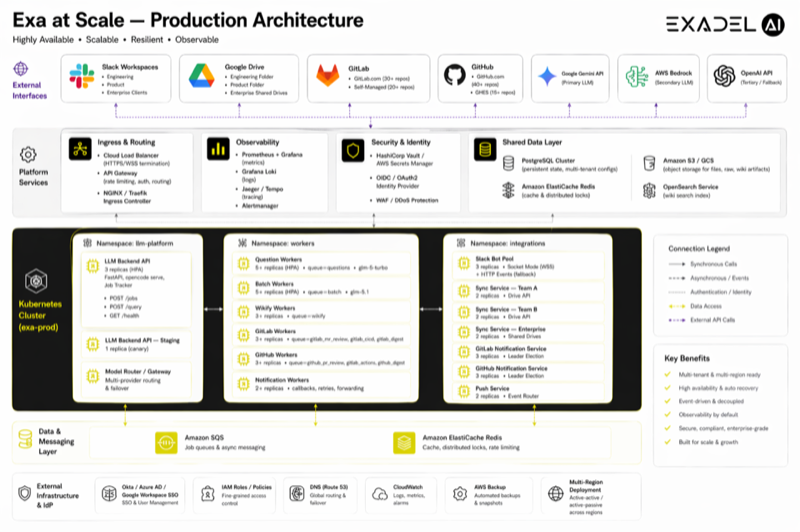

Slack bot, sync engine, LLM backend, question workers, batch processors — each a standalone service. Runs on a single VM (~$13/month on GCP) or scales to Kubernetes.

Three altitudes, one philosophy: structure beats magic.

| Axis | ClearGate | Tee-Mo | Exa |
|---|---|---|---|
| Layer | Agent orchestration | Workspace AI fabric | Org-wide knowledge brain |
| Surface | CLI + MCP | Slack + MCP | Slack + MCP |
| Knowledge model | Karpathy Wiki (index.md) | Karpathy Parity synthesis | LLM-Wiki structured graph |
| Trust model | Human-gated, Git-attributed | BYOK + zero-state | Per-project isolation |
| Status | Planning framework, ready to use | Sovereign engine, BYOK | Production-ready |